Season's Greetings

edited by S. Kaiser, A.R. Gottu Mukkula, T. Ebrahim and Prof. S. Engell

Dear co-workers, project partners, colleagues, friends, and former members of the dyn and pas groups!

We hope that this message reaches you in good health and good spirits, despite the risks and restrictions of another Corona winter! Sometimes one would wish that every decision maker had to take (and pass!) a compulsory short course in the fundamentals of feedback control, just some basics: what runaway is, why it is better to use indicators that react fast rather than indicators that provide information weeks later when the problems have accumulated, and that, when controlling a system whose dynamics you do not fully understand, it is better to use small probing moves and react fast to the outcomes than to apply bang-bang control...

Looking back on 2021, we believe that our groups managed the fully online semesters very well with good outcomes, thanks to the enormous efforts of all group members. The only issue for which we did not come up with solutions that were received favourably by all was how to offer online exams assuring fairness, but also flexibility.

This semester we switched back to classroom teaching, at least until now, which the students seem to enjoy very much. We have been using all channels of communication from the start of the semester: live lectures, live broadcasting via Zoom, and recordings for asynchronous and repeated use. We believe in the maturity of the students to use these opportunities in the way that fits them best. After the experience of the online semesters, the interaction in the lectures so far has been livelier than ever, and our teaching materials have certainly improved as well.

With the new lecture “Machine Learning Methods for Engineers” the PAS group introduced a new course entirely dedicated to machine learning which has been very well received by students.

Regarding research, the DFG Transregio InPROMPT will end in mid-2022 after 12.5 successful years. The achievements are collected in an impressive book. The KEEN project continues to provide a stimulating collective learning experience about the potential and limitations of machine learning when applied to real-world examples and data, both for the partners from industry and academia, and has already triggered interesting methodological work.

In the new EU project Circular Foam that started in October 2021, our groups will contribute to the development of large-scale chemical recycling solutions for polyurethane foam from refrigerators and construction waste, in particular to system-wide modelling, simulation and optimization.

The pas group successfully applied for funding in the framework of a new Priority Program (SPP) of DFG, dealing with machine learning in chemical engineering. The project will explore new ideas to achieve safe reinforcement learning for the optimal startup and operation of complex chemical processes.

Just today, we got the news that the successor project of SIMPLIFY, SIMPLI-DEMO, will receive funding from the EU for 4 years, providing a jump-start for the PAS group in the application domain of the production of particles and highly viscous materials.

You will find a lot more information on research, people, publications etc. on our Season's Greetings web page.

Unfortunately, due to the pandemic, we were not able to plan a live event in 2021. We hope for an opportunity to meet many of you in person in 2022!

We would like to thank all group members, project partners and colleagues for the pleasant and rewarding collaborations in 2021, and we wish you enjoyable holidays and a successful and happy year 2022 in good health!

Sebastian Engell and Sergio Lucia

EU-Project Circular Foam Started

Closing the materials cycle for rigid polyurethane foams: This is the ambitious goal of the new pan-European "CIRCULAR FOAM" project.

The EU-funded lighthouse project brings together 22 partners from 9 countries from industry, academia and society. Within four years, the consortium will jointly establish a complete circular value chain for raw materials for rigid polyurethane foams used as insulation material in refrigerators and the construction industry.

Once implemented across Europe, the system could help to save 1 million tons of waste, 2.9 million tons of CO2 emissions and 150 million euros in incineration costs annually, starting in 2040.

The CIRCULAR FOAM project aims at bringing multiple improvements to the existing material cycle and building a new sustainable circular ecosystem for rigid polyurethane foam. Besides the development of two novel chemical recycling routes for end-of-life materials, waste collection systems as well as dismantling, sorting and logistics solutions will be set up and demonstrated.

The different elements will be combined into an optimized systemic solution, based on integrated system modelling and simulation.

In CIRCULAR FOAM, we will develop an integrated system-wide modelling, simulation and optimization framework into which models of the different elements (collection and separation of waste, logistics, chemical processing and separation, and feed-in into industrial sites) can be embedded. We will also contribute to the design of the chemical processing and separation steps, in particular with respect to robustness and flexibility in terms of the throughput and the composition of the feed streams.

More information: www.circular-foam.eu

InPROMPT Project

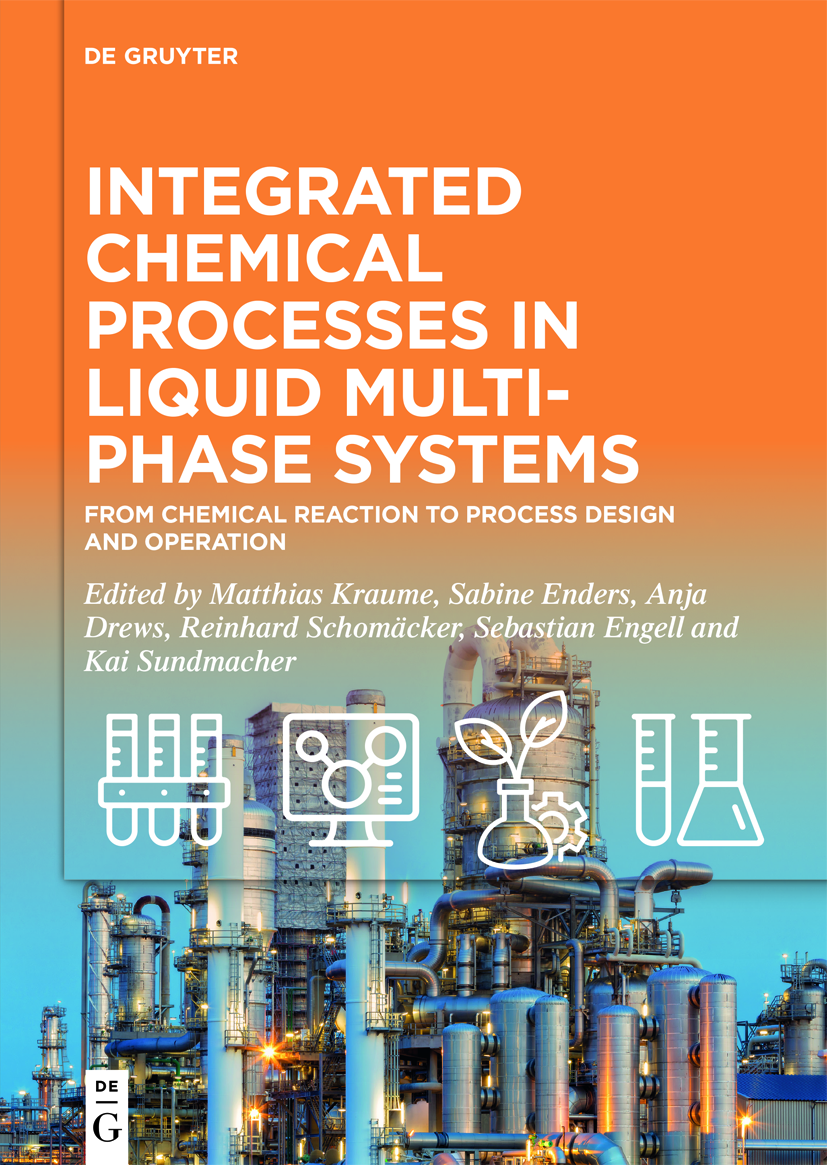

After more than 12 years, the SFB Transregio 63 “Integrated Chemical Processes in Liquid Multiphase Systems” will end in 2022.

The Transregio currently consists of 14 research projects with researchers from TU Berlin, TU Dortmund, OVGU Magdeburg, HTW Berlin, TU Darmstadt, KIT, MPI Magdeburg and HS Anhalt.

The goal is to understand and develop processes based on reactive multiphase systems.

All levels of the process, from the molecular level to the design and operation of the processes in miniplants, are considered.

The results of the research projects within InPROMPT will be published in a book “Integrated chemical processes in liquid multiphase systems”, by De Gruyter.

The book covers the fundamentals of the thermodynamics of multiphase systems, kinetic modelling and the modelling of mass transfer in multiphase systems. It discusses how three types of phase systems (thermomorphic multiphase systems, microemulsion systems and Pickering emulsions) can be characterized and which aspects have to be considered for process design. Tools for process systems engineering, including modelling and simulation, process optimization and model-based monitoring, are presented, and the final chapter integrates the tools into a comprehensive design methodology.

The dyn group contributed sections on surrogate modeling of thermodynamic equilibria, optimization under uncertainty in process development, iterative real-time optimization applied to the hydroformylation of 1-dodecene in a TMS-system on miniplant scale, and on the integrated model-based process design methodology.

A final colloquium of the Transregio is planned for March 31 – April 1, 2022 at DECHEMA, Frankfurt. We would like to thank all colleagues from the Transregio SFB for the pleasant and productive collaboration!

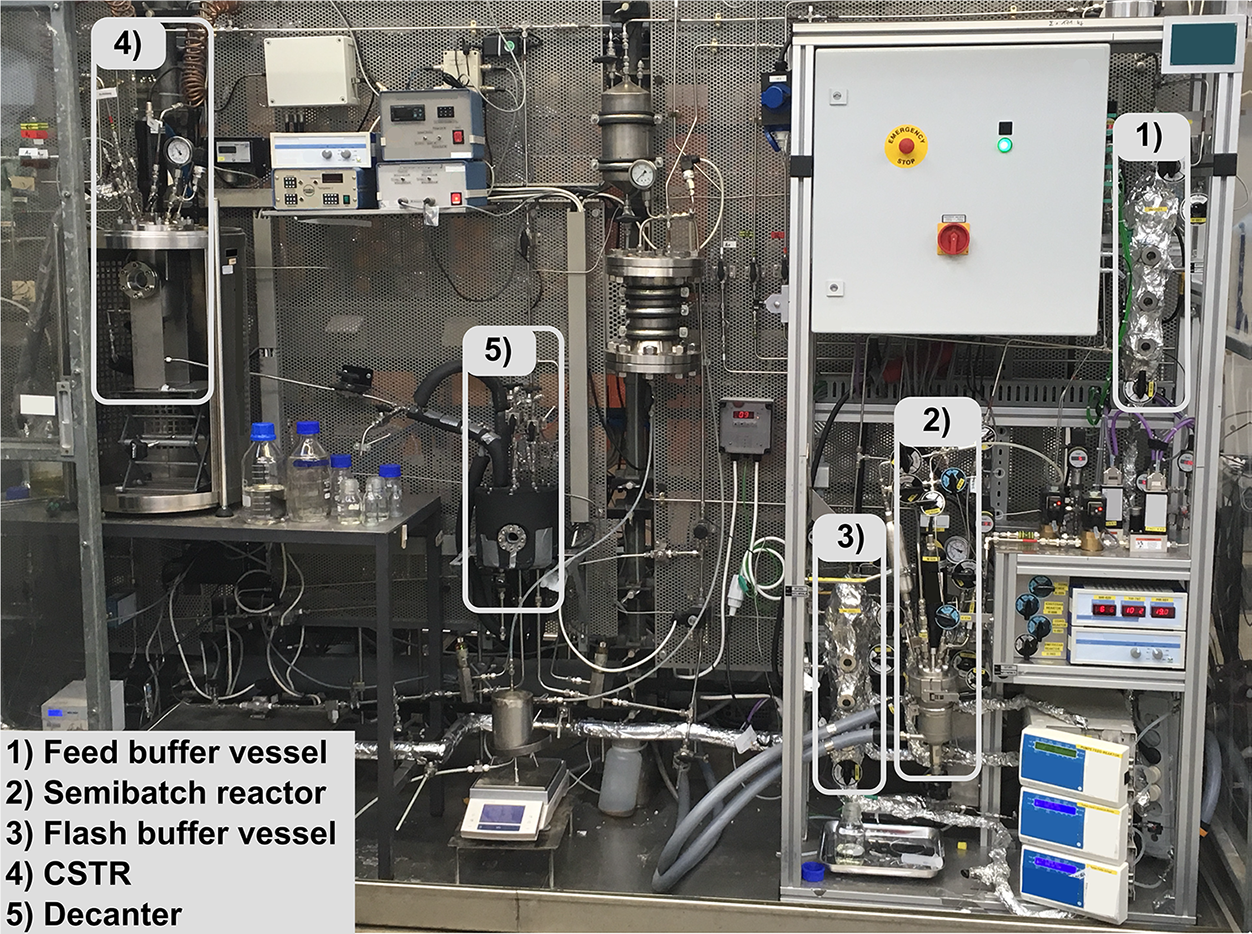

Image of the miniplant with highlighted process units.

SIMPLIFY - Sonication and microwave processing of material feedstock

The SIMPLIFY project is an EU Innovation Action aiming at the electrification of the chemical industry.

Until April 2023, a consortium of 11 European organizations including the dyn group will focus on the development of flexible electrified continuous processes for three industrial case studies.

One of the three case studies is the production of polyurethanes which are used as rheology modifiers in water-based paints. These rheology modifiers are conventionally produced in batches of several cubic meters and directly formulated with water within the same vessel. This production process takes several hours per batch. Within the SIMPLIFY project, the transition to a continuous production of these paint thickeners by reactive extrusion on a twin-screw extruder is investigated, which offers numerous advantages. This transition significantly reduces the time and costs associated with cleaning and enables a fully electrified production using renewable energy sources.

The dyn group contributes its expertise in process modelling, automation and control to the project. A twin-screw extruder model has been extended to account for the reactive extrusion process and is combined with a new model of the chemical system based on the experimental work carried out by the project partners. Model-based optimization is used to determine the optimal screw design and the operating region. The experimental validation of the results and the process demonstration are carried out at Fraunhofer ICT in Pfinztal on an 18 mm Leistritz Maxx extruder, as shown in Figure 1. In collaboration with Fraunhofer ICT, a process automation and measurement concept for this extruder was implemented and validated. This concept includes the central control and recording of all process values with a soft PLC using OPC-UA. With this automation system, it was possible this year to demonstrate a stable production of 4 kg/h of product over a duration of 8 hours. Furthermore, the technical requirements are now met to implement advanced process control in the coming year. Recent theoretical investigations of the application of MAWQA to reactive extrusion processes showed that this control method is well suited to the reactive extrusion process and offers major economic and ecological benefits.

Figure 1: Picture of the reactive extrusion setup with temperature, pressure and viscosity measurements (left) and the ultrasound sonotrode (right) realized at Fraunhofer ICT. © Fraunhofer ICT.

Publications

KEEN-TP7: Self-optimizing plants - Dynamic gray-box modeling of fermentation processes

KEEN connects 20 partners, industrial end users, solution providers and scientific institutions with the objective to introduce artificial intelligence (AI) technologies and methods in the process industry and to evaluate and realize their technical, economical, and social potential.

The goal of the work on self-optimizing plants in KEEN is to improve process operations using machine learning (ML) models within advisory systems or in closed-loop control. In this context, it is crucial to ensure the reliability of the model predictions, especially for applications in feedback control.

So-called gray-box models are a promising direction to increase the reliability and interpretability of ML-models. Here, mechanistic model parts are combined with ML-models. Among others, we consider models in which the dynamics are described by mechanistic relations but some embedded variables are represented by ML-models. An example could be a (bio-)chemical reaction system where the underlying reactions are known, but the dependency of a reaction rate on temperature or concentration is unknown and modelled using ML-models. Because the ML-model is embedded into the dynamic equations, it is challenging to choose an appropriate ML-model structure along with its parameters: the dynamic behavior of the system has to be simulated to evaluate the fitness of each candidate set of model structure and parameters.

We approach this problem in several steps by first estimating what values the ML-models should output to accurately describe the experimental data. This is done by using continuous piece-wise linear functions of time instead of the complex ML-model. This model depends only on the values at the knot points. These knot point values are a lot easier to fit than e.g. a neural net. In the next step, the ML-submodels can be trained using any ML-toolbox, using the values of the piece-wise linear functions as training data. Finally, a full parameter estimation is performed on the basis of a dynamic simulation of the complete model. This procedure is illustrated in Fig. 1.

Figure 1: Steps of decomposed parameter estimation problem for parameterizing dynamic gray-box models with embedded machine learning models
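
The first two steps of this decomposition can be sketched on a toy problem. Everything in the example is an assumption made for illustration and is not taken from the KEEN work: the scalar system dc/dt = -r, the rate law used to generate noise-free synthetic data, the number of knot points, and the cubic polynomial that stands in for the ML submodel. Because the piece-wise linear rate enters the integrated balance linearly, the knot values follow from a linear least-squares problem. The final step, a full parameter estimation on the dynamic simulation, is omitted here.

```python
import numpy as np

def true_rate(c):
    # Hypothetical "unknown" kinetics, used only to generate synthetic data.
    return 2.0 * c / (0.5 + c)

def simulate(rate, c0, t_grid, substeps=50):
    """Integrate dc/dt = -rate(c) with classical Runge-Kutta steps."""
    c = np.empty(len(t_grid))
    c[0] = c0
    for i in range(len(t_grid) - 1):
        h = (t_grid[i + 1] - t_grid[i]) / substeps
        x = c[i]
        for _ in range(substeps):
            k1 = -rate(x)
            k2 = -rate(x + 0.5 * h * k1)
            k3 = -rate(x + 0.5 * h * k2)
            k4 = -rate(x + h * k3)
            x += h * (k1 + 2 * k2 + 2 * k3 + k4) / 6.0
        c[i + 1] = x
    return c

def hat_integrals(t_eval, knots, n_fine=2001):
    """Column j: integral from knots[0] to t of the j-th piece-wise linear hat function."""
    fine = np.linspace(knots[0], knots[-1], n_fine)
    A = np.zeros((len(t_eval), len(knots)))
    for j in range(len(knots)):
        unit = np.zeros(len(knots))
        unit[j] = 1.0
        hat = np.interp(fine, knots, unit)
        steps = 0.5 * (hat[1:] + hat[:-1]) * np.diff(fine)  # trapezoidal rule
        cumulative = np.concatenate(([0.0], np.cumsum(steps)))
        A[:, j] = np.interp(t_eval, fine, cumulative)
    return A

# Synthetic "experiment" (noise-free, for illustration only).
c0 = 2.0
t_data = np.linspace(0.0, 2.0, 31)
c_data = simulate(true_rate, c0, t_data)

# Step 1: replace the ML submodel by a piece-wise linear function of time.
# c(t) = c0 - integral of r(t), which is linear in the knot values of r.
knots = np.linspace(0.0, 2.0, 9)
A = hat_integrals(t_data, knots)
r_knots, *_ = np.linalg.lstsq(-A, c_data - c0, rcond=None)
step1_residual = np.max(np.abs(c0 - A @ r_knots - c_data))

# Step 2: train the submodel r(c) on the knot values (cubic polynomial as stand-in).
c_knots = c0 - hat_integrals(knots, knots) @ r_knots
coeffs = np.polyfit(c_knots, r_knots, 3)
```

In this sketch no dynamic simulation is needed inside the fitting loop of either step, which is the point of the decomposition: the expensive simulation-based estimation is only required once, for the final refinement.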

We have investigated different algorithmic options and obtained promising results for the fermentation of a sporulating bacterium [1, 2]. The methodology is applied to real-world experimental data obtained at pilot-plant scale at Evonik in Hanau.

Figure 2: Picture of the investigated pilot plant. Image by Air Liquide

In one of the investigated use cases, the dyn group collaborates with Air Liquide on the optimal control of a pre-reforming process. Air Liquide provides data from a pilot plant located at their “Innovation Campus” in Frankfurt, where ML-based control algorithms can also be tested. In an adiabatic pre-reformer, higher hydrocarbons are catalytically converted into H2/Syngas. The pre-reforming reactor is installed in front of the main reformer, improving the overall process in terms of efficiency, catalyst lifetime and feedstock flexibility. The goal in KEEN is to increase the energy and material efficiency and to reduce greenhouse gas emissions by improved process control. The dyn group develops data-based (ML) models of the pilot plant from data of the controlled plant supplied by Air Liquide. The developed models will be used in a model predictive controller. Additionally, a mechanistic model of the process has been designed to be used as a benchmark. The solutions will be applied to the pilot plant in the coming years.

Publications

OptiProd project

Make-and-pack process plants make up a significant slice of the total of production plants in the process industry. Their competitiveness, productivity, and resource efficiency largely depend on the quality of the production scheduling. Today, production scheduling is often done manually, which is tedious, expensive, and inefficient. For those reasons, there is an increasing industrial drive towards automated and optimal scheduling solutions.

A schematic representation of a two-stage formulation and filling plant that is investigated as a case study in the OptiProd.NRW project is shown in Figure 1. It consists of a formulation stage, which is decoupled from the downstream filling stage by a set of buffer tanks that are connected through the transfer panel. In addition, the logistics of raw materials and final products as well as the shift schedules of the operators must be considered.

The difficulty of such scheduling problems is the tremendous degree of detail that must be considered to guarantee the feasibility of the plans on the shop floor level. To capture the complex interactions precisely, we make use of the commercial simulation software from INOSIM. It provides a powerful and flexible formalism to capture the features of industrial production processes and can be configured via an easy-to-use interface that enables the design of models without deep knowledge of simulation. The INOSIM software can simulate simple scheduling rules, but it does not offer an optimization of schedules.

Figure 1: Schematic representation of the industrial formulation plant.

During the OptiProd.NRW project, an optimization framework [1] is developed to generate schedules automatically. The framework is based upon customizable Evolutionary Algorithms that propose schedules which are evaluated by the INOSIM simulation model and which are iteratively improved based on the simulation results.
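
The propose, simulate and improve loop described above can be illustrated with a minimal sketch. It is not the project's framework: the job data, the single-machine toy `simulate` function (a stand-in for a detailed simulation model, returning total tardiness), the swap mutation and the (mu + lambda) selection are all assumptions chosen to keep the example short.

```python
import random

# Hypothetical job data: (processing time, due date). Not taken from the project.
JOBS = [(4, 10), (2, 6), (6, 25), (3, 9), (5, 30), (1, 4), (7, 40), (2, 12)]

def simulate(schedule):
    """Toy stand-in for a detailed plant simulation: returns total tardiness."""
    clock, tardiness = 0, 0
    for job in schedule:
        duration, due = JOBS[job]
        clock += duration
        tardiness += max(0, clock - due)
    return tardiness

def mutate(schedule, rng):
    """Swap two positions to propose a neighbouring schedule."""
    child = list(schedule)
    i, j = rng.sample(range(len(child)), 2)
    child[i], child[j] = child[j], child[i]
    return child

def evolve(generations=200, mu=10, lam=20, seed=0):
    rng = random.Random(seed)
    n = len(JOBS)
    # Start from the given job order plus random permutations.
    population = [list(range(n))] + [rng.sample(range(n), n) for _ in range(mu - 1)]
    for _ in range(generations):
        offspring = [mutate(rng.choice(population), rng) for _ in range(lam)]
        # (mu + lambda) selection: parents compete with offspring,
        # so the best schedule found so far is never lost.
        population = sorted(population + offspring, key=simulate)[:mu]
    best = population[0]
    return best, simulate(best)

best_schedule, best_cost = evolve()
```

The optimizer only ever calls `simulate`, so the toy function could in principle be exchanged for a call to an external simulation without changing the search loop. That separation is the design choice the sketch is meant to show.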

The simulation-optimization approach under development will generate schedules that are validated by a very detailed simulation model and optimize the operation of the plant. In 2022, a testing phase with the industrial partner is planned. INOSIM intends to commercialize the project results thereafter.

Publications

HyPro Project

Motivation

Blast furnace-based steel-making is the dominant route for the worldwide production of steel, accounting for 70% of the final product. An industrial blast furnace is a huge gas-solid reactor that typically produces around 4 million tons of liquid iron per year with an annual total CO2 emission of more than 7 million tons. The stable, economically optimal, and environmentally friendly operation of blast furnaces is a challenge due to the complexity of the multi-phase and multi-scale physical and chemical phenomena, the lack of direct measurements of key inner variables, and the occurrence of a wide range of unknown disturbances. Blast furnaces are operated in a semi-automated manner, and the quality of the control depends on the skills and dedication of the operators. The steel industry is strongly interested in better support for the operators or in full automation to achieve a more energy-efficient and stable operation of blast furnaces.

A main objective of the HYPRO project is to develop new control strategies that achieve an improved energetic efficiency. In collaboration with partners such as VDEh-Betriebsforschungsinstitut GmbH (BFI) and thyssenkrupp Steel Europe AG, we developed a hybrid dynamic model-based control scheme for achieving the desired operational objectives of a blast furnace.

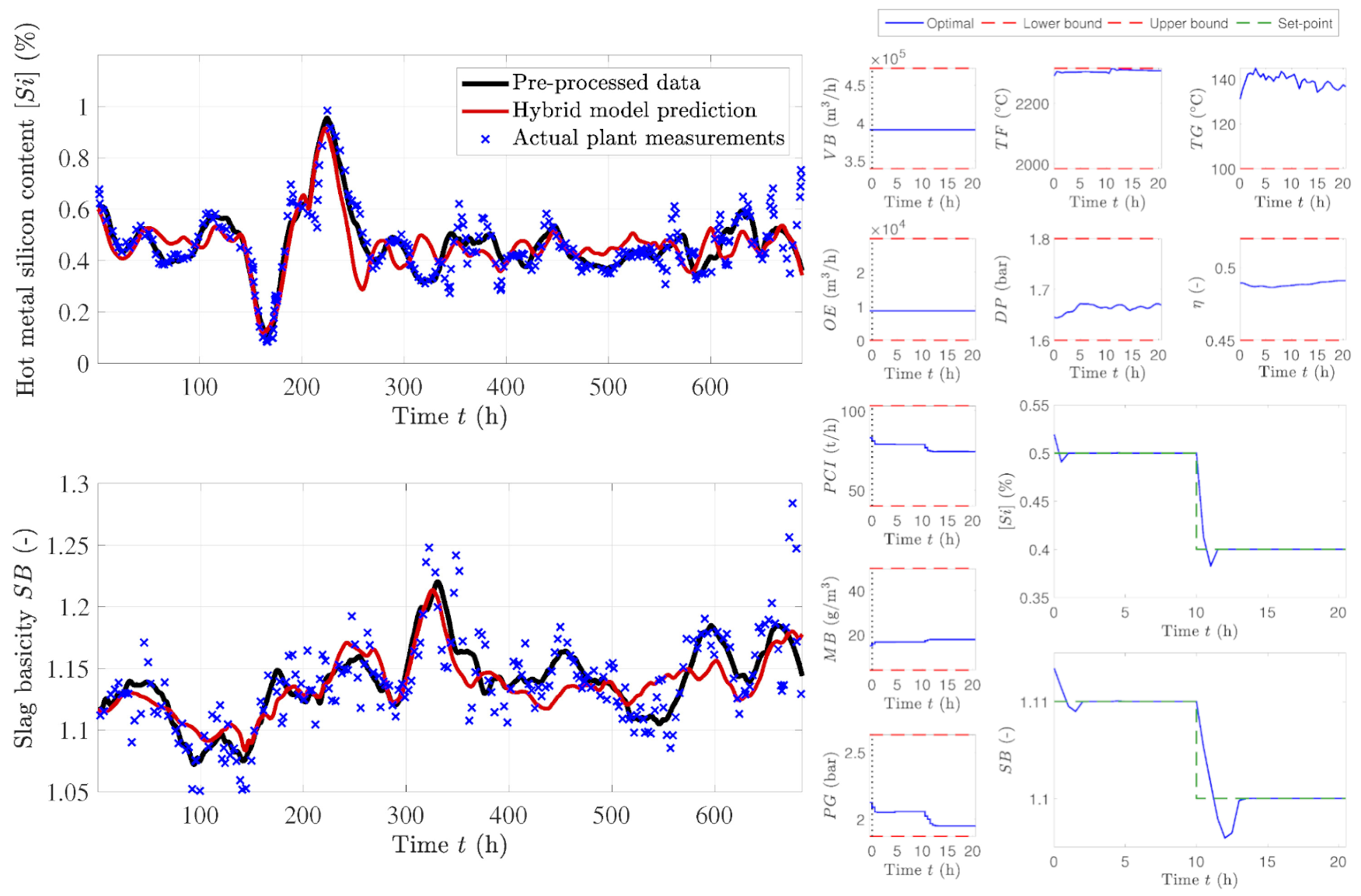

Outcome

Within the hybrid model, we integrated a first-principles-based model of medium complexity with a data-based dynamic neural network model in order to combine the advantages of these two types of models. The hybrid dynamic model provides insights into the blast furnace operation status in terms of thermal status, safety, productivity, and efficiency by predicting quantities at the furnace boundaries such as the molten iron and slag quality indices and the off-gas analysis parameters (temperature (TG), pressure drop (DP), and efficiency factor (η)). Free-run simulation results of the multi-step ahead predictions of the hot metal silicon content ([Si]) and slag basicity (SB) are shown in Fig. 2. Using this model, an optimizing model predictive controller (MPC) was developed for controlling the hot metal silicon content and slag basicity at their desired set-points, subject to operational constraints. This controller regulates the gas phase variables to counteract the process disturbances that are caused by variations in the solid feed. Low values of [Si] and SB lead to improved energy efficiency of the blast furnace process. The simulation results of the control scheme are shown in Fig. 3.

Fig. 2. Multi-step ahead prediction of silicon content ([Si]) and slag basicity (SB) by the hybrid model.

Fig. 3. Simulation results of the MPC scheme.

Publications

dyn Members 2021

We would all like to thank our partners, colleagues, students and alumni for their support and the fruitful collaboration throughout 2021. We wish you a bright, happy and successful year 2022!

Farewell to our colleagues

Fabian Schweers

Fabian Schweers started his research career with the dyn group in 2015. He worked in the EU project CONSENS. Since November 2021, Fabian has been employed at BP Gelsenkirchen.

Afaq Ahmad

Afaq did his BSc in electrical and electronic engineering, focusing on automation and control. Later, he completed his master's in automation & robotics in Dortmund. During his master's studies, he focused on process automation. He then started his research career with the dyn group in May 2016 as a Research Associate, where he focused on Real-Time Optimization. He worked in the DACH, DAAD-PPP, CoPro, and KEEN projects. Since November 2021, Afaq has been employed at BASF SE in large capital projects.

Jesus David Hernandez Ortiz

Jesus joined the dyn group in early 2017 as a Marie-Curie fellow. His research focused on the modeling and optimization of the electric arc furnace steelmaking process. As a Marie-Curie Early Stage Researcher, Jesus spent most of his time at Acciai Speciali Terni in Italy. Since August 2020, he has been employed at BASF SE as an E&I engineer.

New dyn Members

Marion Fiebiger

Marion started working as Prof. Engell's administrative assistant in April 2021. After getting to know the administrative work for the various research projects of the dyn and PAS groups and managing the finances for the chair, she obtained a position at the Department of Educational Sciences and Psychology of TU Dortmund. We will miss her, and she will fondly remember the collegial working atmosphere.

Engelbert Pasieka

Engelbert Pasieka studied Chemical Engineering at TU Dortmund, completing both the Bachelor and the Master program. He conducted his master thesis, titled “Metaheuristic Optimal Batch Production Scheduling with Direct and Heuristics-based Encodings”, in cooperation with INOSIM Software GmbH. He joined the group in August 2021 and is currently involved in the OptiProd.NRW project.

Newly funded DFG Project

A new project entitled “Safe Reinforcement Learning for Start-up and Operation of Chemical Processes” has been awarded by the DFG this year. The project is part of the Priority Program 2331 with the title “Machine Learning in Chemical Engineering. Knowledge Meets Data: Interpretability, Extrapolation, Reliability, Trust”. Our project will explore how promising approaches from the field of reinforcement learning can be combined with ideas from robust predictive control to obtain algorithms that can achieve safe operation. The algorithms will be demonstrated for the start-up and operation of a complex distillation column, together with our project partners at TU Berlin (Prof. Repke).

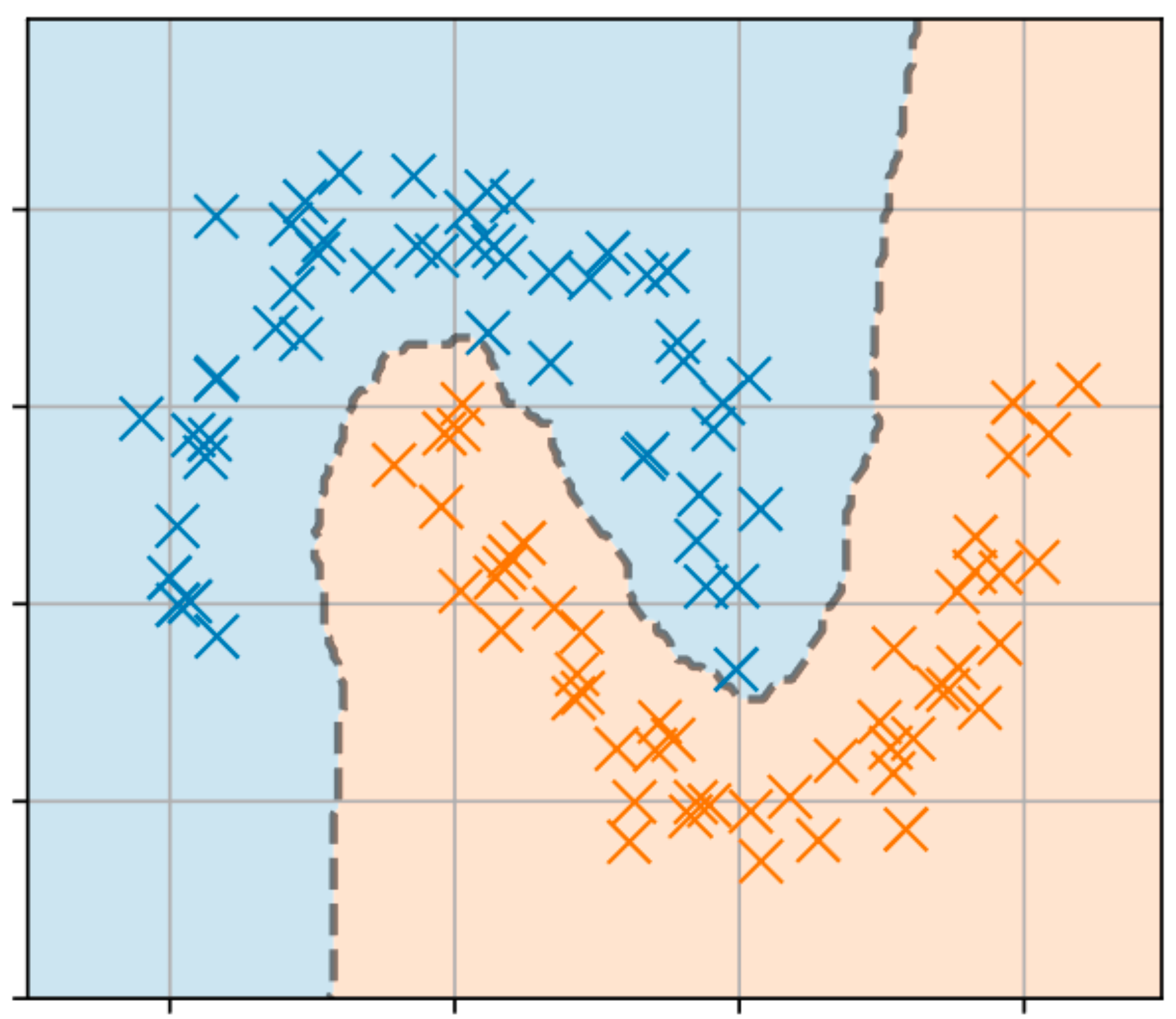

Machine learning methods for engineers

During the summer semester of 2021, we created a new course called “Machine learning methods for engineers”. This lecture is the first one at the Department that is completely focused on machine learning. It covers important basics on probability and optimization as well as efficient implementations and current limitations of machine learning. The course was very well received by the students and will be continued as an elective at the master level.

PAS Members

Lukas Lüken and Moritz Heinlein joined the newly founded Laboratory for Process Automation Systems (PAS) at TU Dortmund this year.

Lukas Lüken

Lukas Lüken received his M.Sc. in Automation Engineering from RWTH Aachen University in January 2021. His master thesis considered the nonlinear model predictive control (NMPC) of a large-scale air separation unit using model reduction techniques and surrogate modeling with neural networks. He started working as a research associate at PAS in February 2021. His research interests are at the intersection of control engineering, optimization and machine learning. His main focus is on directly incorporating optimization problems such as MPC into end-to-end learning frameworks for efficient and robust learning and control in complex environments. He sincerely thanks his colleagues at DYN and PAS for the warm welcome and looks forward to great learning opportunities.

Moritz Heinlein

Moritz Heinlein studied Chemical Engineering in Dortmund from 2015 to 2021, completing both the Bachelor and the Master program. His Bachelor thesis is titled “Theoretische Betrachtung kinetischer Modelle für die Methanchlorierung und Pyrolyse” (Theoretical consideration of kinetic models for methane chlorination and pyrolysis). He completed his master thesis, titled “Comparison of Robust Subspace Predictive Control Methods for non-linear Systems”, at the PAS chair. In November 2021, he joined the PAS group as a research associate. Currently, his research focusses on reducing the number of branches in the scenario tree for nonlinear multi-stage MPC by exploiting certain system properties.

Workshop on Robust Model Predictive Control at Uni Freiburg

DFG Workshop Robust Model Predictive Control (2021). The Laboratory of Process Automation Systems (PAS) is meeting the Systems Control and Optimization Laboratory at Universität Freiburg.

Felix Fiedler, Benjamin Karg, Lukas Lüken and Sergio Lucia from the Laboratory of Process Automation Systems visited the Systems Control and Optimization (Syscop) Laboratory (Prof. Moritz Diehl) at the University of Freiburg for a workshop on Robust Model Predictive Control.

The Workshop is part of our ongoing collaborations with Syscop in the context of the DFG project Robust MPC with high-dimensional uncertainty.

During the three-day event (21.-23.06.2021), all participants had the opportunity to present their current research in the field of Robust Model Predictive Control.

do-mpc developer conference at TU Dortmund

Prof. Dr.-Ing. Sergio Lucia during the presentation.

The first do-mpc developer conference was hosted from 13.09.-15.09.2021 at TU Dortmund. Over the course of three days, the Laboratory of Process Automation Systems hosted multiple online and offline events for developers, users and supporters of do-mpc.

The conference was a great success and we are already looking forward to the next iteration.

pas @ Process Control Conference 2021

M.Sc. Benjamin Karg during the presentation.

From June 1st to 4th, Benjamin Karg represented the pas group at the 23rd International Conference on Process Control in Bratislava, Slovakia. The conference was held in a fully virtual format. pas contributed the following paper to the conference:

pas @ ECC 2021

Felix Fiedler represented the pas group at the European Control Conference from June 29th to July 2nd. The conference was originally planned to take place in Rotterdam, Netherlands, but was held in virtual form due to the Covid-19 pandemic. He presented the paper:

Publications

Journal Articles 2021

Conference Articles 2021

Theses

Master Theses 2021

Journal Articles 2021

Conference Papers 2021

Conference Presentations 2021

Master Theses 2021

Bachelor Theses 2021

Arnold Eucken Medal for Sebastian Engell

On the occasion of the annual meeting of the ProcessNet-Fachgemeinschaft (ProcessNet professional group) “Prozess-, Apparate- und Anlagentechnik (Process, Apparatus and Plant Technology)” in 2021, Professor Dr.-Ing. Sebastian Engell was presented with the Arnold Eucken Medal, donated by the German Association for Process Engineering (GVT) in 1956, during the virtual opening and plenary session on November 22, 2021.

Prof. Engell is honored for his outstanding work on dynamics, automation and optimal control of process engineering processes, and he has dedicated his entire professional career to these complex topics. His developments in methods of control engineering and mathematical optimization processes have introduced a paradigm shift in the process management of process engineering processes. With his work, he has made a major contribution to optimization of complex process engineering processes in real time and making them suitable for broad industrial use.

Doctoral degree awarded to Corina Nentwich

The examination committee congratulating Corina Nentwich

On May 19, 2021, Corina Nentwich, who had been supervised by Prof. Engell, finished the procedures for her doctoral degree with the oral examination at TU Dortmund University. The examination took place under Covid-19 conditions with only the examiners present in a lecture hall and the public attending via Zoom. Thanks to the great preparation by Maximilian Cegla and Tim Janus, the web-based format worked out very well.

Corina Nentwich, a former member of the DYN group at TU Dortmund and currently employed at Evonik Technology & Infrastructure GmbH, obtained the Dr.-Ing. degree for her dissertation “Surrogate modeling of phase equilibrium calculations using adaptive sampling”. Congratulations to Corina Nentwich!

Svetlana Klessova received PhD degree in Management Science under co-supervision of Prof. Engell

Svetlana Klessova after the PhD defense with her two supervisors (left and right) and the President of the PhD jury, Dr. Amel Attour (in black)

On July 12, 2021, Svetlana Klessova, who was involved in management and impact maximisation activities in several of our EU projects in the past, obtained a PhD in Management Science from Université Côte d’Azur, France.

The title of her thesis is: “How to improve the performance of collaborative innovation projects: the role of the architecture, of the size, and of the collaboration processes” (Comment améliorer la performance des projets d’innovation collaboratifs – le rôle de l’architecture, de la taille et des processus de collaboration).

The dissertation was supervised by Prof. Catherine Thomas, GREDEC, Université Côte d’Azur, and by Prof. Engell.

She carried out her PhD research alongside her professional work at an innovation management company in Sophia Antipolis.

International Summer Program ISP 2021

Last year, our annual International Summer Program could not take place due to the Covid-19 pandemic.

This year, 10 students from our overseas partner universities in Asia and America were welcomed to Dortmund in the first virtual edition of the International Summer Program.

The ISP, organized by the dyn chair in close collaboration with the faculty of American Studies and the International Office, provided the students with both an interesting learning environment for various academic subjects and a balanced cultural program.

While everyone regretted that traveling to Germany was not possible, the participants gave the program top grades for the effort invested, which underlines the acceptance and the value of the program for the international activities of the faculty and of the university.

dyn @ ESCAPE31

From June 6th to June 9th, the dyn members Robin Semrau, Filippo Tamagnini, Stefanie Kaiser and Joschka Winz represented the group at the 31st European Symposium on Computer-Aided Process Engineering (ESCAPE-31).

ESCAPE-31 was planned to take place in Istanbul, Turkey. However, due to the pandemic, it was held in a virtual format. The following contributions were presented at the conference:

dyn @ ADCHEM 2021

The 11th Symposium on Advanced Control of Chemical Processes (IFAC ADCHEM2021) was held from June 13 to June 16, 2021. In view of the COVID-19 pandemic, it was decided to move the conference to a purely virtual format.

Many current and former group members represented the dyn group. The following contributions were presented at the conference:

dyn @ ECC 2021

Taher Ebrahim represented the dyn group at the European Control Conference from June 29th to July 2nd. The conference was originally planned to take place in Rotterdam, Netherlands, but was held in a virtual format due to the Covid-19 pandemic. He presented the paper:

dyn @ CPAIOR 2021

In the hot midsummer of Vienna, the 18th International Conference on the Integration of Constraint Programming, Artificial Intelligence and Operations Research (CPAIOR2021) took place at TU Wien as a hybrid conference with around 20 participants present in person.

Interesting interdisciplinary presentations, ranging from purely theoretical contributions to industry-applicable solutions in the fields of scheduling, machine learning, constraint satisfaction and many more, made this event special.

dyn @ GECCO'21

From July 10th to 14th, Christian Klanke represented the dyn group at the Genetic and Evolutionary Computation Conference (GECCO'21) in Lille, France.

The conference was held in a fully virtual format.

dyn @ ECCE/ECAB 2021

From September 20th to 23rd, Filippo Tamagnini, Stefanie Kaiser and Joschka Winz represented the dyn group at the 13th European Congress of Chemical Engineering and 6th European Congress of Applied Biotechnology (ECCE/ECAB 2021).

The conference could not take place in Berlin, Germany, but was held in a virtual format. The dyn contributed the following talks to the program:

dyn @ CDC 2021

From December 13th to 17th, Sankaranarayanan Subramanian attended the 60th Conference on Decision and Control. The conference was planned to take place in Austin, Texas, USA, but like most other conferences this year, CDC2021 was held in a fully virtual format. Mr. Subramanian presented the following journal article:

dyn @ PAAT 2021

On November 22nd and 23rd, Joschka Winz and Maximilian Cegla represented the dyn group at PAAT2021 (Jahrestreffen der ProcessNet-Fachgemeinschaften “Prozess-, Apparate- und Anlagentechnik”). Due to the Covid-19 situation, it was organized as an online event. Chemical engineers, plant constructors, process engineers, and technical chemists from science and industry had the opportunity to present research results, discuss requirements from industrial practice, and jointly develop solutions for new processes in the chemical process industries and other sectors. The dyn group contributed the following talks to the program: